Now that SharePoint Online CSOM works with .NET Standard, I thought I would put together a demo using Visual Studio Code that connects to SharePoint.

In June, a former colleague and still good friend of mine, Vardhaman Deshpande, wrote a blog post showing how to connect to SharePoint Online using MSAL. It is so well written that writing another post on the same topic seems a little pointless, so I have taken his post a little further by connecting to a KeyVault in Azure and grabbing the certificate directly from there.

.NET Standard CSOM of SharePoint Online now uses OAuth for authentication. This means an Access Token needs to be grabbed and passed to every call that is made to SharePoint Online. We will do this by grabbing the AppID and Certificate from the KeyVault and then get the Access Token through ConfidentialClientApplicationBuilder. The Access Token will then be passed into the ClientContext so all calls are made with the Access Token to SharePoint Online.
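The flow described above can be sketched roughly as follows. This is a minimal sketch, not the exact code from the GitHub repository: the class name `SharePointAuthSketch`, its method names, and the `tenantId` parameter (for example `contoso.onmicrosoft.com`) are assumptions made for illustration. It also assumes the certificate has already been loaded into an `X509Certificate2`.

```csharp
using System;
using System.Security.Cryptography.X509Certificates;
using System.Threading.Tasks;
using Microsoft.Identity.Client;
using Microsoft.SharePoint.Client;

// Hypothetical helper class; all names here are illustrative only.
public static class SharePointAuthSketch
{
    // App-only tokens are requested for "<SharePoint host>/.default".
    public static string BuildScope(string siteUrl)
    {
        var uri = new Uri(siteUrl);
        return $"{uri.Scheme}://{uri.Authority}/.default";
    }

    // Uses the client ID and certificate to request an access token from Azure AD.
    public static async Task<string> GetAccessTokenAsync(
        string clientId, string tenantId, X509Certificate2 certificate, string siteUrl)
    {
        IConfidentialClientApplication app = ConfidentialClientApplicationBuilder
            .Create(clientId)
            .WithCertificate(certificate)
            .WithTenantId(tenantId)
            .Build();

        AuthenticationResult result = await app
            .AcquireTokenForClient(new[] { BuildScope(siteUrl) })
            .ExecuteAsync();

        return result.AccessToken;
    }

    // Adds the token as a Bearer header to every request the context sends.
    public static ClientContext CreateContext(string siteUrl, string accessToken)
    {
        var context = new ClientContext(siteUrl);
        context.ExecutingWebRequest += (sender, e) =>
        {
            e.WebRequestExecutor.RequestHeaders["Authorization"] = "Bearer " + accessToken;
        };
        return context;
    }

    // Example call: load and return the title of the site.
    public static async Task<string> GetWebTitleAsync(string siteUrl, string accessToken)
    {
        using (ClientContext context = CreateContext(siteUrl, accessToken))
        {
            context.Load(context.Web, w => w.Title);
            await context.ExecuteQueryAsync();
            return context.Web.Title;
        }
    }
}
```

The token is attached in the `ExecutingWebRequest` event, so any CSOM call made through that context carries it without further changes to the calling code.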

Walk-through Demo

The demo I have put together can be found at my GitHub repository. Using an Azure AD App Registration and a client certificate, I will walk through the steps to set up the following:

- Create a Resource Group in Azure

- Create a KeyVault

- Create a Certificate and Store it in the KeyVault

- Create an Azure AD App registration

- Store the ClientID in the KeyVault Secrets

- Grant Azure AD Application permission for SharePoint – Sites.FullControl.All

- Console code that connects to SharePoint Online.

Setup

Performing all the steps above manually would take a lot of time, and where I can automate things, I do. Therefore, in the GitHub project under the PowerShell folder, there is a PowerShell file called Install-AzureEnvironment.ps1.

This uses the Azure CLI, and running the below script will create the above for you in your Azure environment. Replace “Contso” with the name of your tenant.

The above will create the following Azure resources (using the example of Contso as the tenant name):

- Resource Group: Contso-SharePointMSAL

- App Registration: Contso-SharePointMSAL (Granted with SharePoint > Sites.FullControl.All)

- Key Vault: Contso-SharePointMSAL (Will be truncated to 24 characters if longer)

- CertificateName stored in KeyVault Certificates: Contso-SharePointMSAL

- ClientId stored in KeyVault Secret: ConstoSharePointMSAL

Console Application

Using Visual Studio Code, I’ve created a .NET Core 3.1 console application and added the following NuGet packages. Please see my previous blog post “Basic dotnet commands to create C# project in Visual Studio Code” on how to create a console application and add NuGet packages.

- Microsoft.SharePointOnline.CSOM – Used for the SharePoint CSOM calls

- Microsoft.Identity.Client – Used for OAuth authentication

- Azure.Identity – Used for KeyVault authentication

- Azure.Security.KeyVault.Secrets – Used for getting the Secret and Certificate from the vault

- Microsoft.Extensions.Configuration – Used for collecting appsettings.json values

- Microsoft.Extensions.Configuration.FileExtensions – Used for collecting appsettings.json values

- Microsoft.Extensions.Configuration.Json – Used for collecting appsettings.json values

Next, you will need to create (or update, if you cloned the GitHub project) the appsettings.json file. Replace the environment and site values with those for your environment.
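As a rough illustration of how the three Microsoft.Extensions.Configuration packages fit together, the sketch below loads appsettings.json from a given folder. The class name `SettingsSketch` and the key names `environment` and `site` are assumptions for illustration; use whatever keys your own appsettings.json actually contains.

```csharp
using System.IO;
using Microsoft.Extensions.Configuration;

// Hypothetical helper class; names are illustrative only.
public static class SettingsSketch
{
    // Builds a configuration object from appsettings.json in the given folder.
    public static IConfigurationRoot Load(string basePath)
    {
        return new ConfigurationBuilder()
            .SetBasePath(basePath)                       // from Configuration.FileExtensions
            .AddJsonFile("appsettings.json", optional: false, reloadOnChange: false) // from Configuration.Json
            .Build();
    }
}

// Usage (key names are assumed, not taken from the repository):
//   IConfigurationRoot config = SettingsSketch.Load(Directory.GetCurrentDirectory());
//   string environment = config["environment"];
//   string site = config["site"];
```

Setting `optional: false` makes the application fail straight away if the file is missing, which is usually easier to diagnose than a null value later on.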

Then update the program.cs file with the following code. The code assumes your Azure resources were created using .\Install-AzureEnvironment.ps1.

After the code runs it will display the site Title. In my case ‘TestAPISite’.

The important piece of code for getting the certificate from Azure Key Vault is the GetAppOnlyCertificate function on line 78. This uses the new Azure.Identity and Azure.Security.KeyVault.Secrets libraries.

The Azure.Identity information can be found here: https://docs.microsoft.com/en-us/dotnet/api/overview/azure/identity-readme?view=azure-dotnet

The key to authenticating to the KeyVault is on line 82, which uses DefaultAzureCredential; this attempts to connect using several authentication methods in turn. Once connected, it retrieves the certificate value and creates an X509Certificate2 certificate in memory. The only confusing part of the code is that it uses Azure.Security.KeyVault.Secrets, not Azure.Security.KeyVault.Certificates, to get the value. This is because Key Vault exposes the full certificate, including its private key, as a secret with the same name as the certificate.
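For readers without the repository open, here is a minimal sketch of that idea. It is not the GetAppOnlyCertificate function itself: the class and method names are hypothetical, and it assumes the certificate was created with the PKCS #12 (PFX) content type, so the secret value is a base64-encoded PFX with no password.

```csharp
using System;
using System.Security.Cryptography.X509Certificates;
using System.Threading.Tasks;
using Azure.Identity;
using Azure.Security.KeyVault.Secrets;

// Hypothetical helper class; names are illustrative only.
public static class KeyVaultCertificateSketch
{
    // Key Vault endpoints in the public Azure cloud follow this pattern.
    public static Uri BuildVaultUri(string vaultName)
    {
        return new Uri($"https://{vaultName}.vault.azure.net/");
    }

    // Reads the certificate (with private key) through the Secrets API.
    public static async Task<X509Certificate2> GetCertificateAsync(
        string vaultName, string certificateName)
    {
        var client = new SecretClient(BuildVaultUri(vaultName), new DefaultAzureCredential());

        // The secret shares its name with the certificate.
        KeyVaultSecret secret = await client.GetSecretAsync(certificateName);

        // Assumption: PKCS #12 content, so the value is a base64-encoded PFX.
        byte[] pfxBytes = Convert.FromBase64String(secret.Value);
        return new X509Certificate2(pfxBytes);
    }
}
```

The certificate only ever exists in memory here; nothing is written to disk or installed in a certificate store on the machine running the code.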

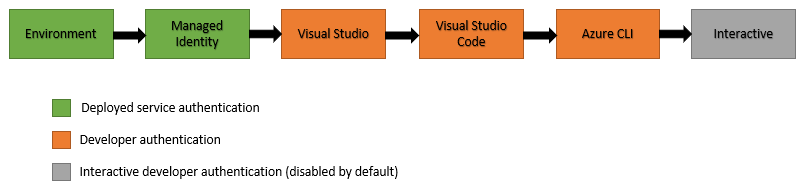

The image below, taken from the Microsoft documentation, shows how DefaultAzureCredential will attempt to authenticate via the following mechanisms, in order.

- Environment – The DefaultAzureCredential will read account information specified via environment variables and use it to authenticate.

- Managed Identity – If the application is deployed to an Azure host with Managed Identity enabled, the DefaultAzureCredential will authenticate with that account.

- Visual Studio – If the developer has authenticated via Visual Studio, the DefaultAzureCredential will authenticate with that account.

- Visual Studio Code – If the developer has authenticated via the Visual Studio Code Azure Account plugin, the DefaultAzureCredential will authenticate with that account.

- Azure CLI – If the developer has authenticated an account via the Azure CLI az login command, the DefaultAzureCredential will authenticate with that account.

- Interactive – If enabled the DefaultAzureCredential will interactively authenticate the developer via the current system’s default browser.

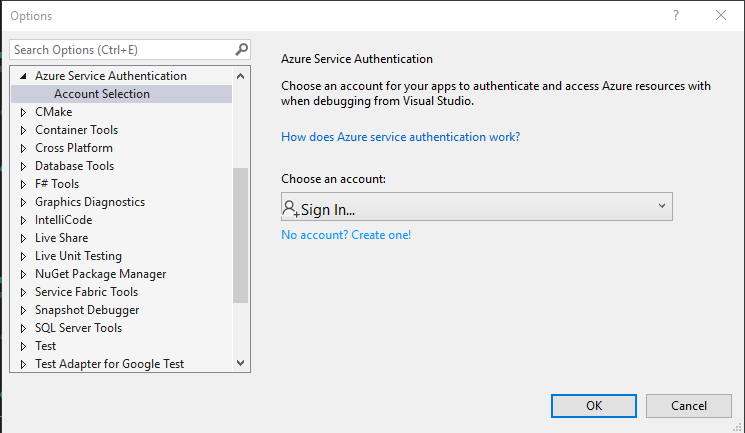

Note: When I first ran my code in Visual Studio Code, I kept getting an authentication error. This was because I had Visual Studio Enterprise installed on my machine, and DefaultAzureCredential was picking up the account selected there, which pointed to a different tenant. You can see this in Visual Studio Enterprise by going into Tools > Options.
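If changing the account in Visual Studio is not an option, one possible workaround is to tell DefaultAzureCredential to skip the Visual Studio mechanism altogether. The sketch below is an assumption on my part rather than something used in the demo; the class and method names are hypothetical, and the `ExcludeVisualStudioCredential` option requires a version of Azure.Identity that includes it.

```csharp
using Azure.Identity;

// Hypothetical helper class; names are illustrative only.
public static class CredentialSketch
{
    // Options that remove the Visual Studio account from the credential chain.
    public static DefaultAzureCredentialOptions BuildOptions()
    {
        return new DefaultAzureCredentialOptions
        {
            ExcludeVisualStudioCredential = true
        };
    }

    // The remaining mechanisms are still tried in their usual order.
    public static DefaultAzureCredential Create()
    {
        return new DefaultAzureCredential(BuildOptions());
    }
}
```

With the Visual Studio credential excluded, the chain falls through to the next mechanism that succeeds, such as the Azure CLI account from `az login`.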

The great thing about DefaultAzureCredential is that if this code were in an Azure Function, I could run it first on my computer, then deploy it to Azure Functions with a Managed Identity, and it would still work without any changes to the code.

I hope you find this blog post useful.